The Role of Azure Data Factory for Effective Data Management

Whether you lead a large corporation or a small startup, efficient data management is essential to your success. Your company’s data drives everyday operations, client interactions, and decision-making, and keeping it under control is what lets you manage inventories, track customer preferences, and assess sales patterns amid day-to-day business. Every company, regardless of size, needs a tool or process that makes data easy to manage and turns it into more than words and figures. Azure Data Factory is one such tool: it helps you manage and organize your data and transform it into insights that move your company forward.

Azure Data Factory is a powerhouse when it comes to data management. It serves as a robust cloud-based data integration service on the Microsoft Azure platform. Whether you’re dealing with small-scale or large-scale data, Azure Data Factory enables you to orchestrate and automate complex data workflows.

This versatile tool facilitates the seamless movement and transformation of data across various sources and destinations. It’s not just about moving data around; Azure Data Factory ensures that your data is transformed, cleaned, and organized in a way that makes it valuable for analysis and reporting.

With its Data Flow feature, Azure Data Factory offers a centralized platform for connecting, gathering, converting, and enriching your data, whether it is structured or unstructured, on-premises or in the cloud. Because it integrates with a wide variety of data sources, it can manage your data across its whole lifecycle.

From building pipelines to supporting continuous integration and deployment (CI/CD) through Azure DevOps and GitHub, Azure Data Factory streamlines data management processes. It also offers monitoring capabilities that track the success and failure rates of your data workflows.

The Azure Data Factory architecture covers data movement, continuous integration and deployment, integration with analytics engines, and data transformation, which together turn raw data into actionable insights.

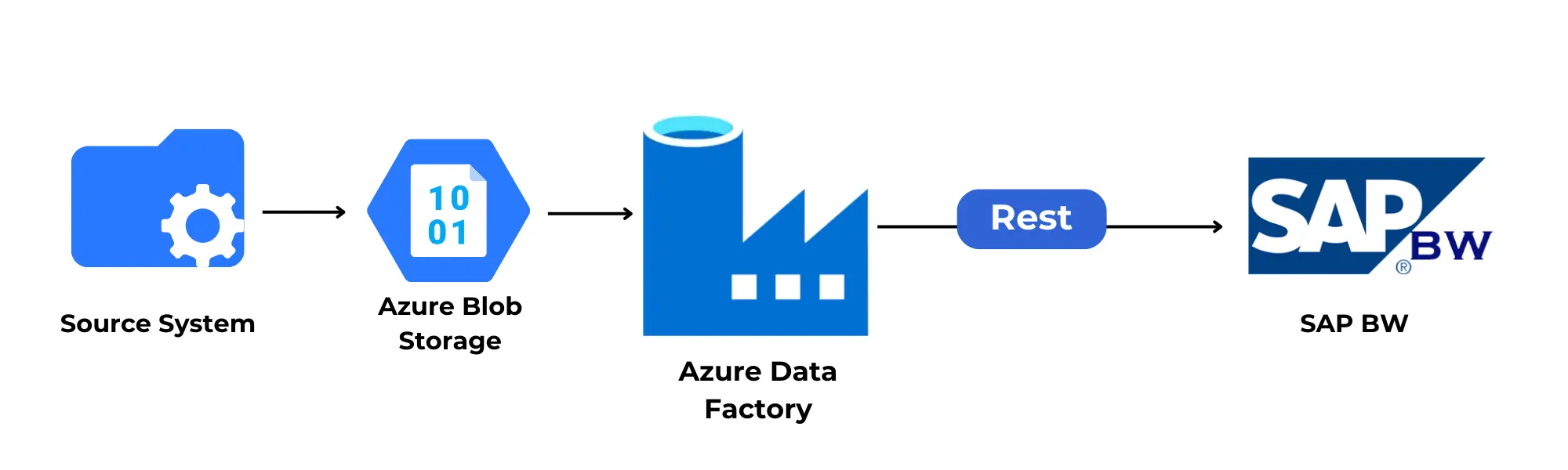

Azure Data Factory begins its operation by establishing connections to diverse data sources. This includes seamless integration with SaaS services, file-sharing platforms, and various online services. The platform acts as a dynamic bridge, ensuring a comprehensive reach across different types of data.

Azure Data Factory lets users design data pipelines, which are organized workflows that define how data flows from source to destination. Scheduling is flexible: pipelines can run at specific intervals or as one-time events, which suits diverse business needs.
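
The difference between an interval schedule and a one-time run can be sketched in plain Python. This is only an illustration of the scheduling idea, not Azure Data Factory's trigger API; the function name `planned_runs` and its parameters are assumptions made for this example.

```python
from datetime import datetime, timedelta
from typing import List, Optional


def planned_runs(start: datetime,
                 interval: Optional[timedelta],
                 window_end: datetime) -> List[datetime]:
    """Return the run times that fall between start and window_end (inclusive).

    interval=None models a one-time run; otherwise the run repeats
    every `interval`, beginning at `start`.
    """
    if start > window_end:
        return []
    if interval is None:
        return [start]
    if interval <= timedelta(0):
        raise ValueError("interval must be positive")
    runs = []
    current = start
    while current <= window_end:
        runs.append(current)
        current += interval
    return runs


start = datetime(2024, 1, 1, 0, 0)
end = datetime(2024, 1, 1, 6, 0)
one_time = planned_runs(start, None, end)                 # a single run at `start`
every_two_hours = planned_runs(start, timedelta(hours=2), end)
```

In Azure Data Factory itself, this choice is made when configuring a trigger for the pipeline rather than in code like this.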

The Copy Activity efficiently transfers data from both on-premises and cloud sources to a centralized data store. This lays the groundwork for subsequent analysis and processing, since the data is gathered in one place.

Azure Data Factory distinguishes itself with adaptive operational modes. Whether opting for scheduled executions or one-time operations, the platform seamlessly adjusts to the chosen mode. This adaptability ensures that the data movement aligns precisely with the unique operational requirements of each business scenario.

The platform goes beyond mere data movement; it embraces comprehensive CI/CD methodologies. Integrated with Azure DevOps and GitHub, Azure Data Factory supports a meticulous approach to ETL process development and delivery. This ensures that data processes undergo refinement before final deployment.

The refined data integrates with analytics engines such as Azure SQL Data Warehouse (now Azure Synapse Analytics) and Azure SQL Database. Business users can then reach it through their preferred Business Intelligence (BI) tools and work from a consistent set of data.

The platform acts as a vigilant overseer with built-in monitoring features. These features offer insights into the success metrics and potential challenges of scheduled activities and pipelines. This proactive oversight ensures the sustained health and reliability of data workflows.

Azure Data Factory also handles data transformation. Using compute services such as HDInsight Hadoop, Azure Data Lake Analytics, and Azure Machine Learning, it turns raw data into a refined form that is ready for analysis.

Automated workflows built with Azure Logic Apps follow a similar trigger-and-action pattern. Consider a workflow that starts with a trigger: a new form submission in Microsoft Forms. The trigger signals the start of the whole process.

To seamlessly integrate the trigger into the workflow, the “Microsoft Forms” connector is employed. This connector serves as the gateway, linking the workflow to the targeted form and enabling the efficient monitoring of submissions.

Once the trigger is activated, the workflow progresses into the action phase. Connectors such as “Office 365 Outlook” come into play, facilitating the automation of tasks. In this context, the action involves sending email notifications, with the content and recipient details dynamically configured based on the data submitted through the form.

The workflow gains sophistication with the introduction of conditional logic. This element allows for dynamic decision-making within the process. For example, depending on specific responses within the form, the workflow can branch into different paths, triggering varied actions or sending diverse email notifications.

For comprehensive record-keeping and future reference, the workflow offers the option to log information about each form submission. Using connectors, data is intelligently stored in a database or a designated file within Azure Storage, ensuring a robust and organized archival system.

The workflow seamlessly concludes, marking the successful execution of the automated process. Every time an individual submits the form, Azure Logic Apps organizes a series of actions: triggering the workflow, sending pre-configured email notifications, executing additional actions based on conditional logic, and, if specified, logging relevant information for future analysis and reference. This structured completion ensures a streamlined and efficient response to form submissions.
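
The trigger, action, conditional logic, and logging steps described above can be sketched as a small Python function. This is a simulation of the flow, not Logic Apps code: the `priority` field, the example addresses, and the names `WorkflowResult` and `handle_submission` are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class WorkflowResult:
    """Collects what the workflow did, in place of real email and storage."""
    emails: List[Dict[str, str]] = field(default_factory=list)
    log: List[Dict[str, str]] = field(default_factory=list)


def handle_submission(form: Dict[str, str], result: WorkflowResult,
                      log_submissions: bool = True) -> None:
    """Run once per form submission (the trigger)."""
    # Conditional logic: branch on a form answer (hypothetical "priority" field).
    if form.get("priority") == "urgent":
        recipient = "oncall@example.com"
        subject = "URGENT: " + form["subject"]
    else:
        recipient = "support@example.com"
        subject = form["subject"]

    # Action: build the notification from the submitted data.
    result.emails.append({
        "to": recipient,
        "subject": subject,
        "body": form.get("message", ""),
    })

    # Optional logging of the submission for later reference.
    if log_submissions:
        result.log.append(dict(form))


result = WorkflowResult()
handle_submission({"subject": "Printer down", "priority": "urgent"}, result)
handle_submission({"subject": "Question"}, result, log_submissions=False)
```

In a real workflow the connectors would send the email and write the log record; here both are only recorded in memory so the branching is easy to follow.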

1. Versatile Data Integration: Azure Data Factory is adept at integrating diverse data sources, including on-premises databases, cloud databases, and various SaaS applications.

2. Scalability and Flexibility: It provides scalable data processing capabilities, allowing businesses to adapt to varying workloads and data volumes with ease.

3. Hybrid Cloud Support: Azure Data Factory seamlessly bridges on-premises and cloud environments, facilitating hybrid cloud scenarios for businesses with diverse infrastructure needs.

4. Comprehensive Data Transformation: The platform supports complex data transformations using services like HDInsight Hadoop, Azure Data Lake Analytics, and Machine Learning, enabling advanced data processing.

5. Operational Efficiency: With built-in monitoring features, Azure Data Factory ensures proactive oversight of scheduled activities and pipelines, enhancing operational efficiency.

6. CI/CD Support: It embraces Continuous Integration/Continuous Deployment (CI/CD) methodologies, enabling developers to refine and deploy ETL processes with precision.

7. Analytics Engine Integration and Accessibility: Refined data integrates with analytics engines such as Azure SQL Data Warehouse (now Azure Synapse Analytics) and Azure SQL Database, giving business users streamlined access through their preferred BI tools.

8. No-Code Data Workflows: Azure Data Factory lets non-technical users configure data workflows without writing code, which suits businesses that rely on citizen integrators rather than developers.

9. Easy SSIS Migration: For businesses accustomed to SQL Server Integration Services (SSIS), Azure Data Factory eases the move to the cloud. It can run existing SSIS packages through the Azure-SSIS integration runtime and provides a migration wizard for a smooth transition.

When handling data, a tool that combines, transforms, and helps make sense of different types of information is crucial. Microsoft Azure Data Factory fills that role on the Azure cloud: it simplifies complex data workflows and connects different sources and destinations. Its integration runtime provides the compute used for data movement and processing across environments.

Azure Data Factory acts like a bridge between your business and various data sources, such as online services, file-sharing platforms, and other tools. This helps keep all your data in one organized place.

With Azure Data Factory, you can make detailed plans for how your data moves from one place to another. It works like a well-organized to-do list for your data. You can decide when these actions happen, whether regularly or just once, to fit your business needs.

The Copy Activity feature of Azure Data Factory takes data from on-premises systems or cloud sources and puts it all in one central store. This makes it ready for analysis and understanding.

Azure Data Factory can adapt to how you want things done. Whether you have a regular schedule for moving data or just need it to happen once, this tool can handle it all, making sure your data moves just the way you want it to.

Besides moving data, Azure Data Factory helps improve how you work with data over time. It works closely with tools like Azure DevOps and GitHub to make sure any changes or improvements in your data processes are carefully checked before they’re put into action.

After your data is organized, it can be loaded into tools like Azure SQL Data Warehouse (now Azure Synapse Analytics) and Azure SQL Database. This makes it simple for people in your business to use their favorite tools for understanding and using the data.

Azure Data Factory doesn’t just do the work; it keeps an eye on everything too. It has built-in features that help you see how well things are going and if there are any problems. This way, you can make sure your data tasks are always in good shape.

When working with Azure Data Factory (ADF), adhering to best practices is crucial for achieving peak performance in data workflows. Here, we break down key recommendations to streamline and enhance your ADF processes.

Organizing pipelines meticulously is foundational to smooth data integration. Employ logical grouping, consistent naming conventions, and visualization tools for easy navigation, efficient debugging, and scalable designs.

Use parameterization to accomplish dynamic value changes without compromising the fundamental architecture of the pipeline. Identifying what can change, setting up parameters, and smoothly blending them into your pipeline activities are key steps for effective parameterization.
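
As a rough illustration of the idea, the sketch below substitutes parameter values into a pipeline-like template. Azure Data Factory resolves expressions such as `@pipeline().parameters.sourceFolder` itself at run time; the `resolve` helper and the simplified `copy_step` structure here are assumptions for this example and do not reflect the full pipeline JSON schema.

```python
import re
from typing import Any, Dict

# Matches a value that is exactly one pipeline parameter reference.
_PARAM_REF = re.compile(r"^@pipeline\(\)\.parameters\.(\w+)$")


def resolve(template: Any, params: Dict[str, Any]) -> Any:
    """Return a copy of `template` with parameter references replaced."""
    if isinstance(template, dict):
        return {key: resolve(value, params) for key, value in template.items()}
    if isinstance(template, list):
        return [resolve(value, params) for value in template]
    if isinstance(template, str):
        match = _PARAM_REF.match(template)
        if match:
            name = match.group(1)
            if name not in params:
                raise KeyError(f"missing pipeline parameter: {name}")
            return params[name]
    return template


# Simplified, hypothetical activity definition: only the folder and
# table change between runs, so only those are parameterized.
copy_step = {
    "name": "CopySales",
    "source": {"folder": "@pipeline().parameters.sourceFolder"},
    "sink": {"table": "@pipeline().parameters.targetTable"},
}

january = resolve(copy_step, {"sourceFolder": "raw/2024-01",
                              "targetTable": "dbo.Sales"})
```

The same template can be reused for every month or environment by passing different parameter values, while the structure of the pipeline stays untouched.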

For large datasets, opt for incremental loading to transfer only new or modified data, reducing processing time and optimizing resource utilization. Identify changes, extract and compare data, and merge or update for efficient incremental loading.
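
One common way to identify changes is a watermark: remember the latest modification time already loaded and copy only rows newer than it. The sketch below shows that pattern in plain Python. The `modified_at` and `id` column names and the in-memory source and target are assumptions for the example; in practice the source and sink would be real data stores.

```python
from datetime import datetime
from typing import Dict, List, Tuple

Row = Dict[str, object]


def incremental_load(source: List[Row], target: Dict[int, Row],
                     watermark: datetime) -> Tuple[int, datetime]:
    """Upsert rows modified after `watermark`.

    Returns the number of rows loaded and the new watermark to store
    for the next run.
    """
    # Identify changes: only rows newer than the last loaded timestamp.
    changed = [row for row in source if row["modified_at"] > watermark]

    # Merge or update: insert new ids, overwrite existing ones.
    for row in changed:
        target[row["id"]] = row

    # Advance the watermark only if something was loaded.
    new_watermark = max((row["modified_at"] for row in changed),
                        default=watermark)
    return len(changed), new_watermark


source_rows = [
    {"id": 1, "modified_at": datetime(2024, 1, 1), "qty": 5},
    {"id": 2, "modified_at": datetime(2024, 1, 3), "qty": 7},
]
target_rows = {1: {"id": 1, "modified_at": datetime(2024, 1, 1), "qty": 5}}

loaded, watermark = incremental_load(source_rows, target_rows,
                                     datetime(2024, 1, 2))
```

Running the function again with the returned watermark loads nothing, which is the point: unchanged data is never transferred twice.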

Implement robust error handling and proactive monitoring: alerts, detailed logging, custom notifications, and conditional flows that route failures to the right handler. This minimizes downtime and protects data integrity and operational efficiency when something unexpected happens.
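
A minimal sketch of one such technique, retrying a failing step while logging each attempt, is shown below. It is a generic Python pattern rather than an Azure Data Factory feature; the function name `run_with_retry` and its parameters are chosen for this example only.

```python
import logging
import time
from typing import Callable, TypeVar

T = TypeVar("T")
log = logging.getLogger("pipeline")


def run_with_retry(activity: Callable[[], T], retries: int = 3,
                   delay_seconds: float = 0.0) -> T:
    """Run `activity`, retrying up to `retries` extra times on failure.

    Every failure is logged; the last exception is re-raised once the
    retries are exhausted so the caller can alert or branch on it.
    """
    attempt = 0
    while True:
        try:
            return activity()
        except Exception as exc:
            attempt += 1
            log.warning("attempt %d failed: %s", attempt, exc)
            if attempt > retries:
                log.error("giving up after %d attempts", attempt)
                raise
            # Wait a little longer after each failed attempt.
            time.sleep(delay_seconds * attempt)


calls = {"count": 0}


def flaky_copy() -> str:
    """Stand-in for a step that fails twice with a transient error."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise RuntimeError("transient network error")
    return "copied"


outcome = run_with_retry(flaky_copy, retries=3)
```

Retrying suits transient faults such as network timeouts; errors caused by bad data should instead be logged and routed to a failure path, since repeating the step will not fix them.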

Prioritize security through strong authentication, encryption (at rest and in transit), and managed identities. Adhering to principles like least privilege, maintaining audit trails, and regular updates contributes to a secure data environment.

Optimize resource allocation by right-sizing resources based on pipeline needs, prioritizing critical tasks, and balancing load across them. Continuous monitoring and performance tuning help reduce cost, improve performance, and keep pipelines scalable.

Ensure thorough testing and validation to minimize risks and instill confidence in deployment. Techniques like data profiling, defining validation rules, and automated validation processes contribute to effective testing.
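
Validation rules can be as simple as named checks applied to every row before data is promoted. The sketch below assumes hypothetical `id` and `amount` fields and an in-memory list of rows; it illustrates the approach and is not a built-in Azure Data Factory facility.

```python
from typing import Callable, Dict, List

Row = Dict[str, object]
Rule = Callable[[Row], bool]

# Each rule has a readable name so failures explain themselves.
RULES: Dict[str, Rule] = {
    "id is present": lambda row: row.get("id") is not None,
    "amount is non-negative": lambda row: (
        isinstance(row.get("amount"), (int, float)) and row["amount"] >= 0
    ),
}


def validate(rows: List[Row], rules: Dict[str, Rule] = RULES) -> List[str]:
    """Return one message per failed rule; an empty list means all rows pass."""
    failures = []
    for index, row in enumerate(rows):
        for name, rule in rules.items():
            if not rule(row):
                failures.append(f"row {index}: {name}")
    return failures


clean = validate([{"id": 1, "amount": 10.0}])
problems = validate([{"id": None, "amount": -5}])
```

A check like this can run automatically after each load, and a non-empty result can block deployment or trigger a notification.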

Comprehensive documentation serves as a guiding compass for navigating intricate pipelines. Clear purpose statements, architecture overviews, and detailed activity descriptions, complemented by visuals like diagrams and flowcharts, enhance knowledge transfer, troubleshooting, and overall efficiency.

Azure Data Factory is a powerful solution for data management because it can transform, integrate, and organize a wide range of data sources. From data connection to analytics integration, it offers operational flexibility and efficiency. By using Azure Data Factory, businesses can streamline their data processes and keep data administration under control, leading to better operational efficiency and improved decision-making.
